Vulnerability Management and Web App Scanning

Tenable Vulnerability Management and Tenable Web App Scanning (WAS) are cloud-based solutions that provide industry-leading visibility across your entire attack surface. With advanced predictive analytics, these products enable prioritized, risk-based remediation—supporting a complete vulnerability management lifecycle.

Validation Criteria

Your integration with Tenable Vulnerability Management and Tenable Web App Scanning should meet the following criteria:

- The integration must support bidirectional asset synchronization if the integration uses an asset model.

- Ensure that all API calls from your integration use a standard User-Agent string as outlined in the User-Agent Header guide. This enables Tenable to identify your integration's API calls to assist with debugging and troubleshooting.

- Contact Tenable via the Tech Alliances Application to validate your third-party integration with Tenable's product or platform.

- Explain how your integration uses Tenable's API and the specific endpoints utilized. Tenable may request read access to your integration's codebase to validate scalability and recommend best practices.

- Ensure that your integration uses the proper naming conventions, trademarks, and logos in your integration's user interface. You can download a Media Kit on Tenable's media page.

Data Model

Tenable Vulnerability Management and Tenable Web App Scanning use a discrete asset model with the following data types that are referred to throughout this guide:

- Asset—An asset is an entity of value on a network that can be exploited. An asset can be anything, including laptops, desktops, servers, routers, printers, mobile phones, virtual machines, software containers, web applications, and cloud instances.

- Plugin—A plugin is a vulnerability definition used to detect a vulnerability on an asset. Vulnerability definitions are also sometimes referred to as vulnerability signatures.

- Finding—A finding is a single instance of a vulnerability appearing on an asset, identified uniquely by plugin ID, port, and protocol. For example:

We discovered plugin 12345 on asset ABCD on port 1234.

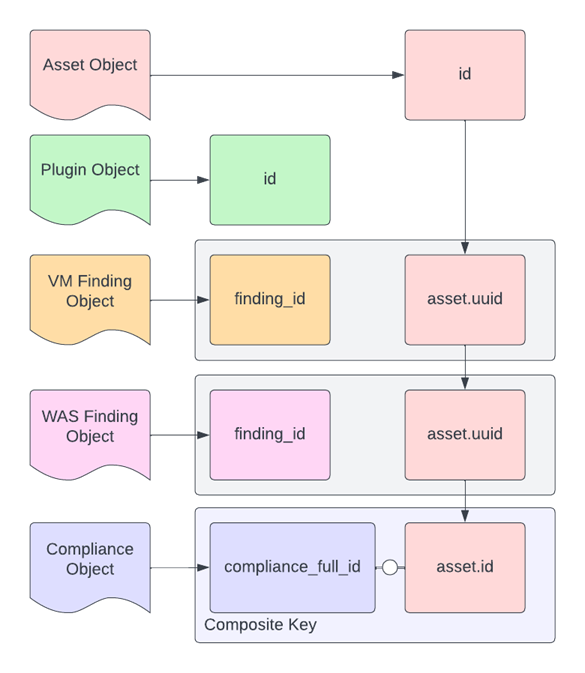

The following diagram illustrates the relationships between these three data types.

Several plugins can detect vulnerabilities on several assets, and a single plugin might detect vulnerabilities on the same asset but on different ports, so you can only uniquely identify a single finding by using a composite key (as shown in the data model diagram) with these properties:

- asset.uuid

- plugin.id

- port.port

- port.protocol

Compliance findings, on the other hand, use a simple composite key of check_id and asset_uuid.

- check_id

- asset_uuid
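As a minimal sketch of how an integration might derive these keys, the following helpers build a hashable key from a finding record. The function and class names are hypothetical, and the record shapes assume the dotted property names listed above map to nested dictionaries (for compliance findings, flat `check_id` and `asset_uuid` fields are assumed).

```python
from typing import NamedTuple


class FindingKey(NamedTuple):
    """Composite key that identifies one vulnerability finding."""
    asset_uuid: str
    plugin_id: int
    port: int
    protocol: str


def finding_key(finding: dict) -> FindingKey:
    """Build the composite key from a vulnerability finding record."""
    return FindingKey(
        asset_uuid=finding["asset"]["uuid"],
        plugin_id=finding["plugin"]["id"],
        port=finding["port"]["port"],
        protocol=finding["port"]["protocol"],
    )


def compliance_key(finding: dict) -> tuple:
    """Build the simpler (check_id, asset_uuid) key for a compliance finding."""
    return (finding["check_id"], finding["asset_uuid"])
```

Because both keys are tuples, they can be used directly as dictionary keys when upserting findings into a local store.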

Data Export Workflow

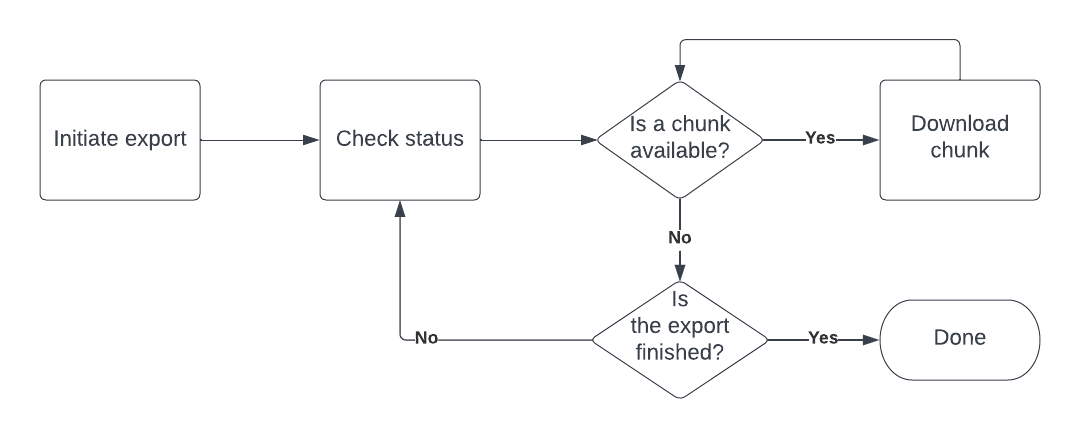

Tenable Vulnerability Management and Tenable Web App Scanning use an asynchronous job-based API for the exportation of asset, vulnerability, and compliance data from the platform. A high-level workflow for exporting data from these APIs is illustrated in the following diagram.

- Initiate an export—Initiate a request to start an export job using your desired filters and job specification. The Vulnerability Management API returns an export job UUID when you initiate the request. You can use the following endpoints to initiate an export request.

- Check status of export job—Check the status of the export job and look for any chunks that are available. You can use the following endpoints to check the status of the export job.

- Download export data chunk—Download a chunk of data from the export. You can use the following endpoints to download data chunks.
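The three steps above can be sketched as a polling loop. This is an illustration rather than Tenable's implementation: the three callables are hypothetical wrappers around the initiate, status, and download requests, and the `status` and `chunks_available` field names and the `FINISHED`, `ERROR`, and `CANCELLED` values are assumptions about the status response. The pyTenable export iterators shown later in this guide handle this loop for you.

```python
import time
from typing import Callable, Iterator


def run_export(
    start_export: Callable[[], str],
    get_status: Callable[[str], dict],
    download_chunk: Callable[[str, int], list],
    poll_seconds: float = 5.0,
    sleep: Callable[[float], None] = time.sleep,
) -> Iterator[dict]:
    """Yield every record of an export job as its chunks become available."""
    export_uuid = start_export()  # step 1: initiate the export
    downloaded = set()
    while True:
        status = get_status(export_uuid)  # step 2: check the job status
        for chunk_id in status.get("chunks_available", []):
            if chunk_id not in downloaded:
                # step 3: download each chunk exactly once
                yield from download_chunk(export_uuid, chunk_id)
                downloaded.add(chunk_id)
        state = status.get("status")
        if state == "FINISHED":
            return
        if state in ("ERROR", "CANCELLED"):
            raise RuntimeError(f"export {export_uuid} ended with status {state}")
        sleep(poll_seconds)
```

Downloading chunks while the job is still processing keeps memory use flat, since records are yielded as soon as each chunk is ready.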

Your third-party integration should pull data from Vulnerability Management and Web App Scanning in an efficient manner. Because the dataset is stateful, full exports are unnecessary. Export only the data relevant to your integration to reduce processing overhead. You can easily export only the delta from the last run and maintain parity with Vulnerability Management without having to reprocess the same data by implementing the recommendations in the following section.
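One way to export only the delta is to persist a checkpoint between runs and pass it as the `since` filter on the next run. The sketch below assumes a local JSON file as the checkpoint store and a hypothetical `export_since` callable that runs the actual export; neither is part of the Tenable API.

```python
import json
import time
from pathlib import Path
from typing import Callable, Optional


def load_checkpoint(path: Path) -> Optional[int]:
    """Return the Unix timestamp saved by the last successful run, if any."""
    if not path.exists():
        return None
    return json.loads(path.read_text())["since"]


def save_checkpoint(path: Path, started_at: int) -> None:
    """Persist the start time of the run that just completed."""
    path.write_text(json.dumps({"since": started_at}))


def run_delta_sync(
    path: Path,
    export_since: Callable[[Optional[int]], None],
    now: Callable[[], float] = time.time,
) -> Optional[int]:
    """Run one export using the stored checkpoint, then advance it."""
    started_at = int(now())  # captured before exporting so no update is missed
    since = load_checkpoint(path)  # None means this is the first (full) run
    export_since(since)  # if this raises, the checkpoint is left untouched
    save_checkpoint(path, started_at)
    return since
```

Recording the start time rather than the end time means records updated while the export was running are picked up again on the next run instead of being skipped.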

Asset Exports

Asset metadata is critical to maintaining the state of vulnerability findings since all findings are linked to an asset. It's possible to have orphaned findings if the asset state isn't taken into account. For example, if an asset was terminated or deleted from Vulnerability Management then the vulnerability findings associated with that asset no longer exist and the findings never transition to a closed state.

Assets themselves can exist in three distinct states:

- Active—The default state of an asset. Active assets are live assets on a network.

- Deleted—The state that occurs when an asset is deleted from the Vulnerability Management platform. Deleted assets have their vulnerability information deleted and the findings don't transition to a closed state.

- Terminated—The state that occurs when an asset has been terminated in a cloud environment. If Vulnerability Management is connected to a cloud platform via a cloud connector, the connector relays created and terminated assets to Vulnerability Management and replicates that information within the platform. Terminated assets do not have their associated findings transitioned to a closed state.
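Because findings on deleted or terminated assets never transition to a closed state, an integration has to retire them itself. The following is a minimal sketch that classifies exported assets by state, assuming the `timestamps.deleted_at` and `timestamps.terminated_at` properties described later in this guide are empty or absent for active assets. The function names are hypothetical.

```python
def asset_state(asset: dict) -> str:
    """Classify an exported asset as active, deleted, or terminated."""
    timestamps = asset.get("timestamps") or {}
    if timestamps.get("deleted_at"):
        return "deleted"
    if timestamps.get("terminated_at"):
        return "terminated"
    return "active"


def inactive_asset_ids(assets: list) -> set:
    """Return the IDs of assets whose findings should be retired locally."""
    return {asset["id"] for asset in assets if asset_state(asset) != "active"}
```

An integration could then close or remove every locally stored finding whose asset UUID is in the returned set.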

Asset Export Request

Tenable recommends that you consider the following filters and settings (parameters) when you request an asset export.

Note: The following filter and settings recommendations assume the user is using the Export assets v2 endpoint. The superseded Export assets v1 endpoint does not support the sources or types filter.

- chunk_size—Specifies the number of assets per exported chunk. The range is 100 to 10000. If this parameter is omitted, Vulnerability Management uses the default value of 100. Tenable recommends that you use a size of 4000 to 5000 for the best performance.

- include_open_ports—If set to true, includes open port findings from info-level plugins for each asset. However, note that this data can be highly variable and can dramatically increase the size of the asset export response data. Additionally, Tenable does not recommend including both open port findings and resource tags in the same export.

- include_resource_tags—If set to true, includes cloud provider resource tags in the asset objects. However, as with open port data, this information can be highly variable and can dramatically increase the size of the asset export. Additionally, Tenable does not recommend including both open port findings and resource tags in the same export.

- filters.since—Returns all assets that were updated, deleted, or terminated since the specified date, regardless of state. The date must be specified in the Unix timestamp format.

- filters.sources—Returns assets that have any of the sources specified. All sources are returned if left unspecified.

- filters.types—Specifies the type of asset data to return. The supported types are host and webapp. If this parameter is omitted, Tenable Vulnerability Management uses the default value host.

Tip: For a full list of asset export parameters, see the Export assets v2 endpoint.

Example asset export request

The following is an example asset request with a chunk_size of 4000 and a since filter:

POST /assets/v2/export

{
  "chunk_size": 4000,
  "filters": {
    "since": 1234567890
  }
}

Example pyTenable code snippet for an asset export request

The following is an example pyTenable code snippet for an asset export request with a chunk_size of 4000 and a since filter. For more information about asset exports with pyTenable, see Exports in the pyTenable documentation.

from tenable.io import TenableIO

tvm = TenableIO(access_key='abc', secret_key='def')

for asset in tvm.exports.assets_v2(since=1234567890, chunk_size=4000):
    print(asset)

Response Chunk Properties

The following are important asset export chunk response properties for the asset export v2 data model to note:

- id—The UUID of the asset stored within Tenable Vulnerability Management. Use this value as the unique key for the asset.

- types—The type of asset returned, host or webapp.

- sources—The source that identified the asset and its details.

- scan.first_scan_time—The date and time the asset was first scanned.

- scan.last_scan_time—The date and time the asset was last scanned.

- scan.last_authenticated_scan_date—The date and time a credentialed scan was last performed on the asset.

- network.ipv4s—Unsorted list of IPv4 addresses associated with the asset.

- network.ipv6s—Unsorted list of IPv6 addresses associated with the asset.

- network.mac_addresses—Unsorted list of MAC addresses associated with the asset.

- network.hostnames—Unsorted list of hostnames associated with the asset.

- network.network_interfaces—List of associated network attributes as assigned to each interface.

- open_ports—List of discovered open ports and the associated metadata for each port.

- timestamps.created_at—The date and time when the asset was created.

- timestamps.updated_at—The date and time when the asset was last updated.

- timestamps.deleted_at—The date and time when the asset was deleted.

- timestamps.terminated_at—The date and time when the asset was terminated from a cloud platform.

- acr_score—The Asset Criticality Rating (ACR) for the asset.

- exposure_score—The Asset Exposure Score (AES) for the asset.

- ratings.acr.score—The Asset Criticality Rating (ACR) v3 score.

- ratings.aes.score—The Asset Exposure Score (AES) v2 score.

TipFor a full list of asset export chunk response properties, see the Download assets chunk endpoint.

Vulnerability Exports

Exporting vulnerability findings is one of the most common use cases for the export API. Each finding object in the export data combines a large amount of information into a single monolithic record. While this structure may seem convenient, relying on its embedded sub-objects can lead to inaccurate or outdated data for the following reasons:

- The asset sub-object within the finding is only there for backwards compatibility. Aside from the asset.uuid attribute, the rest of the data is simply a best guess since the asset sub-object stored within the finding doesn't account for multiple IP addresses, DNS addresses, etc.

- The plugin sub-object within the finding is only as current as the last observation of the finding. This means that if the plugin metadata, such as the vulnerability priority rating or the CVSS score, was updated since the finding was last observed, then the plugin sub-object will not reflect those updates.
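One way to avoid the stale sub-objects is to treat the finding as a set of references and join it against asset and plugin records that the integration exports separately. The sketch below assumes two hypothetical in-memory lookups, `assets_by_id` (keyed by the asset `id` from the asset export) and `plugins_by_id` (keyed by plugin ID from the plugin export). It is an illustration, not a prescribed design.

```python
def enrich_finding(finding: dict, assets_by_id: dict, plugins_by_id: dict) -> dict:
    """Join a finding with current asset and plugin records by identifier."""
    asset = assets_by_id.get(finding["asset"]["uuid"])
    plugin = plugins_by_id.get(finding["plugin"]["id"])
    return {
        "finding_id": finding.get("finding_id"),
        "state": finding.get("state"),
        "port": finding.get("port"),
        # Fall back to the embedded copy only when no current record is cached.
        "asset": asset if asset is not None else finding["asset"],
        "plugin": plugin if plugin is not None else finding["plugin"],
    }
```

With this approach, a plugin metadata update only requires refreshing `plugins_by_id`; stored findings do not need to be re-exported.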

Vulnerability findings can exist within one of the following three states:

- Open—Findings that Tenable has determined to be active on the host.

- Fixed—Findings that have been remediated and have since transitioned from the active state to a resolved state.

- Reopened—Findings that were fixed but have been re-observed as an open finding.

Note: The API uses different terms for vulnerability states than the user interface. The new and active states in the user interface map to the open state in the API. The resurfaced state in the user interface maps to the reopened state in the API. The fixed state is the same.
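If your integration lets users pick states using the user interface terms, the mapping described in the note can be captured in a small lookup table. The names below are hypothetical.

```python
# User interface state -> API state, as described in the note above.
UI_TO_API_STATE = {
    "new": "open",
    "active": "open",
    "resurfaced": "reopened",
    "fixed": "fixed",
}


def api_states(ui_states: list) -> list:
    """Translate user interface state names into unique, sorted API states."""
    return sorted({UI_TO_API_STATE[state.lower()] for state in ui_states})
```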

Export chunks for vulnerability findings are compiled differently than asset export chunks. Unlike asset export chunks, vulnerability findings chunks are compiled in a multi-step process.

- The job handler searches for assets that match the asset-related filters and allocates them to different chunks.

- Each chunk is independently processed per the vulnerabilities associated with the assets within that chunk.

This multi-step process means that the size of each vulnerability findings chunk can vary widely. Some chunks contain no data, while others contain findings for the full number of assets specified per chunk. Empty chunks (chunks without any findings) are automatically dropped from the export; however, they are still included in the count of total chunks that were processed. This disparity is a common point of confusion, so remember that the total number of chunks processed is not necessarily equal to the number of chunks available for download.
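In practice this means an integration should iterate over the chunk IDs that the status response reports as available, not over a range derived from the total count. The following sketch assumes the status response exposes a `chunks_available` list and a `total_chunks` count; both field names and the sample values are assumptions for illustration.

```python
def downloadable_chunks(status: dict, already_downloaded: set) -> list:
    """Return chunk IDs that are listed as available and not yet fetched."""
    available = status.get("chunks_available", [])
    return [chunk_id for chunk_id in available if chunk_id not in already_downloaded]


# Hypothetical status body: five chunks were processed, but two were empty
# and dropped, so only three can be downloaded.
status = {"total_chunks": 5, "chunks_available": [1, 3, 4]}
print(downloadable_chunks(status, already_downloaded={1}))  # prints [3, 4]
```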

When you create the vulnerability findings export, you can define the number of assets per chunk via the num_assets parameter. Additionally, because of the multi-step process described above, if you intend to pull findings data for assets past their licensable period (generally 90 days), you should use the include_unlicensed parameter when you create the export request.

Vulnerability Export Request

Tenable recommends that you consider the following parameters when creating a vulnerability findings export request.

- num_assets—Specifies the number of assets whose findings are collected within each chunk of the export. The default is 50 and the maximum is 5000. Tenable recommends a value between 1000 and 3000 for the best performance.

- include_unlicensed—Specifies whether or not to include unlicensed assets. Setting this parameter to true returns both licensed and unlicensed asset findings.

- filters.severity—Specifies the severity of the vulnerabilities to include in the export. Tenable recommends that you set this parameter to ["medium", "high", "critical"] unless you need informational (non-vulnerability) or low severity (CVSS score below 4.0) findings.

- filters.since—Returns findings that have been observed since the specified Unix timestamp. This parameter uses the appropriate attribute for each findings state, so it's recommended over discrete state filters.

- filters.state—Specifies the state of the vulnerability findings that you want to include in the export. By default, Vulnerability Management only returns open and reopened findings, so you need to specify all three states if you want both open and closed findings. For example, ["open", "fixed", "reopened"].

Tip: For a full list of vulnerability export parameters, see the Export vulnerabilities endpoint.

Example vulnerability export request

The following is an example vulnerability export request for both licensed and unlicensed assets with since, state, and severity filters:

POST /vulns/export

{
  "num_assets": 500,
  "include_unlicensed": true,
  "filters": {
    "since": 1234567890,
    "state": ["OPEN", "REOPENED", "FIXED"],
    "severity": ["LOW", "MEDIUM", "HIGH", "CRITICAL"]
  }
}

Example pyTenable code snippet for a vulnerability export request

The following is an example pyTenable code snippet for a vulnerability export request for both licensed and unlicensed assets with since, state, and severity filters. For more information about vulnerability exports with pyTenable, see Exports in the pyTenable documentation.

from tenable.io import TenableIO

tvm = TenableIO(access_key='abc', secret_key='def')

for finding in tvm.exports.vulns(since=1234567890,
                                 state=['open', 'reopened', 'fixed'],
                                 severity=['low', 'medium', 'high', 'critical'],
                                 num_assets=500,
                                 include_unlicensed=True,
                                 ):
    print(finding)

Response Chunk Properties

The following are important vulnerability export chunk response properties to note.

- finding_id—The unique identifier for the finding.

- first_found—The date and time a scan first observed the finding on the asset.

- last_fixed—The date and time a scan no longer detected the previously detected finding on the asset.

- last_found—The date and time a scan last observed the finding on the asset.

- indexed—The date and time that the finding was last updated internally.

- output—Plugin output for the specific finding.

- severity—The severity of the vulnerability as defined using the Common Vulnerability Scoring System (CVSS) base score.

- severity_default_id—The default severity risk id (0-5). This value is assigned by Tenable to indicate the risk.

- severity_id—The finding severity risk id (0-5). This value is assigned by the customer to indicate recast risk.

- severity_modification_type—Indicates whether the finding has undergone any modifications. Possible values are none, accepted, and recast.

- state—The state of the finding. Possible values are open, reopened, or fixed.

- asset.uuid—The identifier for the asset associated with the finding.

- plugin.id—The identifier of the plugin that identified the finding.

- plugin.cve—The Common Vulnerabilities and Exposures (CVE) IDs associated with the plugin.

- plugin.xrefs—References to third-party information about the vulnerability, exploit, or update associated with the plugin.

- plugin.name—The name of the plugin that identified the finding.

- plugin.description—The plugin description for the plugin that identified the finding.

- plugin.solution—The solution text for the plugin that identified the finding.

- plugin.synopsis—The plugin synopsis for the plugin that identified the finding.

- plugin.cvss3_base_score—The CVSS version 3 base score associated with the plugin.

- plugin.cvss_base_score—The CVSS version 2 base score associated with the plugin.

- plugin.vpr.score—The Vulnerability Priority Rating (VPR) associated with the plugin.

- port.port—The port number the scanner used to communicate with the asset where the finding was identified.

- port.protocol—The protocol the scanner used to communicate with the asset where the finding was identified.

- port.service—The service the scanner used to communicate with the asset where the finding was identified.

Tip: For a full list of vulnerability export chunk response properties, see the Download vulnerability chunk endpoint.

Compliance Exports

The logic used for compliance exports is less complex. Compliance export chunks are defined by the num_findings parameter and you can specify asset filters to narrow the scope of the results to only the asset UUIDs you're interested in.

Compliance Export Request

Tenable recommends that you consider the following parameters for compliance exports:

- num_findings—Specifies the number of compliance findings per exported chunk. The minimum is 50, the maximum is 10000, and the default is 5000.

- asset—An array of asset UUIDs to narrow the export results. If this parameter is unspecified, the export includes findings for all assets.

- filters.since—Filters the export results to include findings that have been updated since the specified timestamp.

Tip: For a full list of compliance export parameters, see the Export compliance data endpoint.

Example compliance export request

The following is an example compliance export request with a num_findings of 5000 along with a since and state filter:

POST /compliance/export

{
  "num_findings": 5000,
  "filters": {
    "since": 1234567890,
    "state": ["OPEN", "REOPENED", "FIXED"]
  }
}

Example pyTenable code snippet for a compliance export request

The following is an example pyTenable code snippet for a compliance export request with a num_findings of 5000 along with a since and state filter. For more information about compliance exports with pyTenable, see Exports in the pyTenable documentation.

from tenable.io import TenableIO

tvm = TenableIO(access_key='abc', secret_key='def')

for finding in tvm.exports.compliance(since=1234567890, state=['open', 'reopened', 'fixed']):
    print(finding)

Response Chunk Properties

The following are important compliance export chunk response properties to note.

- compliance_full_id—A unique identifier that identifies a full compliance result in the context of an audit. The identifier is a hash of fields within the compliance check (excluding external references). The identifier changes if any of the fields within the compliance check change.

- compliance_control_id—A unique identifier for the aggregation of multiple results to single recommendations in CIS and DISA audits. This identifier is a computed and hashed value for CIS and DISA content that enables customers to match checks that evaluate the same recommendation within a benchmark.

- compliance_functional_id—A unique identifier for aggregating or comparing compliance results that were tested the same way. The identifier is a hash of the code within the audit that actually performs the check. The identifier changes if functional evaluation of the audit changes.

- compliance_informational_id—A unique identifier for aggregating or comparing compliance results that have the same informational data. For example, the same solution text. The identifier is a hash of the info and solution fields within the compliance check. The identifier changes if either of these fields are updated.

- asset.id—The identifier of the asset associated with the finding.

- audit_file—The audit file (checklist) that the finding is associated with.

- check_info—Details about the compliance check that was performed.

- check_name—The name of the compliance check.

- compliance_benchmark_name—The name of the compliance benchmark. For example, CIS SQL Server 2019.

- compliance_benchmark_version—The version of the compliance benchmark.

- expected_value—The desired value (integer or string) for the compliance check. For example, if a password length compliance check requires passwords to be 8 characters long, then 8 is the expected value. For manual checks, this field contains the command used for the compliance check.

- actual_value—The actual value (integer, string, or table) evaluated from the compliance check. For example, if a password length compliance check requires passwords to be 8 characters long, but the evaluated value was 7, then 7 is the actual value. For manual checks, this field contains the output of the command that was executed.

- first_seen—The date and time when the compliance finding was first observed.

- indexed_at—The date and time when the compliance finding was last updated internally.

- last_fixed—The date and time when the compliance finding was last fixed.

- last_seen—The date and time when the compliance finding was last assessed on the asset.

- reference—List of industry references, including frameworks and controls, relating to the compliance check.

- see_also—Links to external websites that contain reference information about the compliance check.

- solution—Remediation information for the compliance check.

- status—The compliance finding status. Possible values include PASSED, FAILED, WARNING, SKIPPED, or UNKNOWN.

Tip: For a full list of compliance export chunk response properties, see the Download compliance chunk endpoint.

Web App Scanning Exports

Exporting findings from Web App Scanning (WAS) requires dedicated export APIs due to fundamental differences in data structure. The WAS API uses a distinct data schema and endpoint structure tailored to the nuances of web application security. While the data format differs from that of Vulnerability Management findings, the overall export workflow remains generally the same.

WAS Export Request

Tenable recommends that you consider the following parameters when creating a WAS findings export request.

- num_assets—Specifies the number of assets used to chunk the findings. The default is 50 and the maximum is 5000. Tenable recommends a value between 1000 and 3000 for the best performance.

- include_unlicensed—Specifies whether or not to include unlicensed assets. Setting this parameter to true returns both licensed and unlicensed asset findings.

- filters.severity—Specifies the severity of the vulnerabilities to include in the export. Tenable recommends that you set this parameter to ["medium", "high", "critical"] unless you need informational (non-vulnerability) or low severity (CVSS score below 4.0) findings.

- filters.since—Returns findings that have been observed since the specified Unix timestamp. This parameter uses the appropriate attribute for each findings state, so it's recommended over discrete state filters.

- filters.state—Specifies the state of the vulnerability findings that you want to include in the export. By default, Web App Scanning only returns open and reopened findings, so you need to specify all three states if you want both open and closed findings. For example, ["open", "fixed", "reopened"].

Tip: For a full list of WAS export parameters, see the Export findings endpoint.

Example WAS export request

The following is an example WAS findings export request for both licensed and unlicensed assets with since, state, and severity filters:

POST /was/v1/export/vulns

{
  "num_assets": 500,
  "include_unlicensed": true,
  "filters": {
    "since": 1234567890,
    "state": ["OPEN", "REOPENED", "FIXED"],
    "severity": ["LOW", "MEDIUM", "HIGH", "CRITICAL"]
  }
}

Example pyTenable code snippet for a WAS finding export

The following is an example pyTenable code snippet for a WAS findings export request for both licensed and unlicensed assets with since, state, and severity filters. For more information about WAS exports with pyTenable, see WAS in the pyTenable documentation.

from tenable.io import TenableIO

tvm = TenableIO(access_key='abc', secret_key='def')

for finding in tvm.exports.was(num_assets=500,
                               since=1234567890,
                               include_unlicensed=True,
                               severity=['low', 'medium', 'high', 'critical'],
                               state=['open', 'reopened', 'fixed'],
                               ):
    print(finding)

Response Chunk Properties

The following are important WAS findings export chunk response properties to note.

- finding_id—The unique identifier for the finding.

- url—The fully-qualified domain name or URL associated with the finding.

- input_type—The type of HTML form input associated with the finding.

- input_name—The type of page element that's vulnerable. For example, an HTML form.

- http_method—The HTTP method associated with the finding. For example, the GET or POST HTTP method.

- first_found—The date and time that the finding was first observed.

- last_fixed—The date and time that the finding was last fixed.

- last_found—The date and time that the finding was last observed.

- indexed_at—The date and time that the finding was last updated internally.

- output—The text output from the plugin that detected the finding.

- proof—The output from the web application corroborating that the finding is present.

- payload—The attack payload used to detect the finding.

- severity—The severity of the finding as defined using the Common Vulnerability Scoring System (CVSS) base score.

- severity_default_id—The default severity risk id (0-5). This value is assigned by Tenable to indicate the risk.

- severity_id—The finding severity risk id (0-5). This value is assigned by the customer to indicate recast risk.

- severity_modification_type—Indicates whether the finding has undergone any modifications. Possible values are none, accepted, and recast.

- recast_reason—The text that appears in the Comment field of the recast rule in the Tenable Web App Scanning user interface.

- recast_rule_uuid—The identifier associated with the recast rule within the WAS application.

- state—The state of the finding. Possible values are open, reopened, or fixed.

- asset.uuid—The identifier for the asset associated with the finding.

- plugin.id—The identifier of the plugin that identified the finding.

- plugin.cve—The Common Vulnerabilities and Exposures (CVE) IDs associated with the plugin.

- plugin.cwe—The Common Weakness Enumeration (CWE) IDs associated with the plugin.

- plugin.owasp_2010—The OWASP Top 10 (2010) categories that this plugin relates to.

- plugin.owasp_2013—The OWASP Top 10 (2013) categories that this plugin relates to.

- plugin.owasp_2021—The OWASP Top 10 (2021) categories that this plugin relates to.

- plugin.owasp_api_2019—The OWASP API Top 10 (2019) categories that this plugin relates to.

- plugin.wasc—The Web Application Security Consortium (WASC) IDs for the plugin.

- plugin.xrefs—References to third-party information about the finding, exploit, or update associated with the plugin.

- plugin.name—The name of the plugin that identified the finding.

- plugin.description—The plugin description for the plugin that identified the finding.

- plugin.solution—The solution text for the plugin that identified the finding.

- plugin.synopsis—The plugin synopsis for the plugin that identified the finding.

- plugin.cvss3_base_score—The CVSS version 3 base score associated with the plugin.

- plugin.cvss_base_score—The CVSS version 2 base score associated with the plugin.

- vpr.score—The Vulnerability Priority Rating (VPR) associated with the plugin.

Tip: For a full list of WAS findings export chunk response properties, see the Download findings export chunk endpoint.

Plugin Exports

Exporting plugin details from Vulnerability Management is different than exporting account-specific metadata. You can use the List plugins endpoint to retrieve a paginated list of plugin information.

Plugin Export Request

Tenable recommends that you consider the following parameters for plugin export requests:

- last_updated—Filters the response to include only plugins updated after the specified date. This filter accepts timestamps in ISO Date (YYYY-MM-DD) format instead of Unix format.

- size—The number of plugin records to include for each page.

- page—Specifies which page in the paginated data you want to retrieve. Pages start at 1.
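Because last_updated takes an ISO date while the export filters take Unix timestamps, an integration that keeps a single checkpoint needs to convert between the two. The following sketch shows that conversion plus a simple page loop. `fetch_page` is a hypothetical callable that performs the request and returns the parsed response body; the `data.plugin_details` path follows the response properties listed in this guide, and stopping on a short or empty page is an assumption about how the final page looks.

```python
from datetime import datetime, timezone
from typing import Callable, Iterator


def unix_to_iso_date(timestamp: int) -> str:
    """Convert a Unix timestamp to the YYYY-MM-DD form used by last_updated."""
    return datetime.fromtimestamp(timestamp, tz=timezone.utc).strftime("%Y-%m-%d")


def iter_plugins(
    fetch_page: Callable[..., dict], last_updated: str, size: int = 1000
) -> Iterator[dict]:
    """Yield plugin records page by page, starting at page 1."""
    page = 1
    while True:
        body = fetch_page(last_updated=last_updated, size=size, page=page)
        plugins = body.get("data", {}).get("plugin_details", [])
        if not plugins:
            return
        yield from plugins
        if len(plugins) < size:  # a short page is treated as the last page
            return
        page += 1
```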

Example plugin export request for plugins updated after 2022-12-01

GET /plugins/plugin?last_updated=2022-12-01&size=1000&page=1

Example pyTenable code snippet for a plugin export request

For more information about plugin exports with pyTenable, see Plugins in the pyTenable documentation.

from tenable.io import TenableIO

tvm = TenableIO(access_key='abc', secret_key='def')

for plugin in tvm.plugins.list():
    print(plugin)

Plugin Response Properties

The following are important plugin export chunk response properties to note.

- data.plugin_details.id—The identifier of the plugin.

- family_name—The family (category) that the plugin belongs to.

- name—The name of the plugin.

- data.plugin_details.attributes.cve—The Common Vulnerabilities and Exposures (CVE) IDs associated with the plugin.

- data.plugin_details.attributes.cvss3_base_score—The CVSS version 3 base score associated with the plugin.

- data.plugin_details.attributes.cvss_base_score—The CVSS version 2 base score associated with the plugin.

- data.plugin_details.attributes.description—The description of the plugin.

- data.plugin_details.attributes.patch_publication_date—The date and time the vendor published a patch to address the vulnerability the plugin detects.

- data.plugin_details.attributes.plugin_modification_date—The date and time Tenable last updated the plugin.

- data.plugin_details.attributes.plugin_publication_date—The date and time Tenable first published the plugin.

- data.plugin_details.attributes.vuln_publication_date—The date and time that the vulnerability was originally published.

- data.plugin_details.attributes.risk_factor—The risk factor of the vulnerability associated with the plugin. The risk factor is determined based on the calculation of the CVSS score.

- data.plugin_details.attributes.solution—The solution text for the plugin.

- data.plugin_details.attributes.synopsis—A summary of the vulnerability the plugin detects.

- data.plugin_details.attributes.vpr.score—The Vulnerability Priority Rating (VPR) associated with the plugin.

- data.plugin_details.attributes.vpr.updated—The date and time the VPR score was last updated.

- data.plugin_details.attributes.xrefs—References to third-party information about the vulnerability, exploit, or update associated with the plugin.

Tip: For a full list of plugin response properties, see the List plugins endpoint.

Asset Imports

You can import asset metadata to preload assets or to update assets within the Vulnerability Management platform. Asset metadata is processed using the same logic as scan data to determine whether the asset already exists. Depending on the result, the relevant action (create or update) is performed using the provided metadata, and the source named in the import is added to the asset as one of the sources feeding its metadata.

The asset import API can be used to import asset data in JSON format. A single request cannot exceed a payload size of 5 MB. For an example of how to construct an asset import request, see Add Asset Data to Vulnerability Management in the Developer Portal.

Asset Import Request

The asset import endpoint accepts the following parameters:

- source—A user-defined name for the source of the import containing the asset records. While this parameter is a free-form string, Tenable recommends that you define the source formatted as a clear source identifier, such as "VendorName" or "VendorName ProductName".

- assets—A list of asset objects to import into the Vulnerability Management platform. Each asset object requires at least one of the fqdn, ipv4, netbios_name, or mac_address properties. However, the more supported metadata that you include with an asset, the more likely a match can be made. For a list of supported properties, refer to Asset Attribute Definitions.
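To stay under the 5 MB request limit, a large asset list can be split into batches before import. The following is a minimal sketch: it assumes the limit applies to the JSON-encoded request body, conservatively treats 5 MB as 5,000,000 bytes, and checks that each asset carries at least one of the identifying properties. The helper names are hypothetical.

```python
import json
from typing import Iterable, Iterator

MAX_PAYLOAD_BYTES = 5_000_000  # assumption: conservative reading of "5 MB"
IDENTIFYING_PROPERTIES = ("fqdn", "ipv4", "netbios_name", "mac_address")


def has_identifier(asset: dict) -> bool:
    """Check that the asset has at least one non-empty identifying property."""
    return any(asset.get(name) for name in IDENTIFYING_PROPERTIES)


def batch_assets(
    source: str, assets: Iterable[dict], max_bytes: int = MAX_PAYLOAD_BYTES
) -> Iterator[list]:
    """Yield lists of assets whose encoded request body fits within max_bytes."""
    envelope = len(json.dumps({"source": source, "assets": []}).encode("utf-8"))
    batch, size = [], envelope
    for asset in assets:
        if not has_identifier(asset):
            raise ValueError(f"asset has no identifying property: {asset!r}")
        # +2 covers the ", " separator that json.dumps emits between list items.
        asset_size = len(json.dumps(asset).encode("utf-8")) + 2
        if batch and size + asset_size > max_bytes:
            yield batch
            batch, size = [], envelope
        # A single asset larger than the limit still goes out in its own batch.
        batch.append(asset)
        size += asset_size
    if batch:
        yield batch
```

Each yielded batch can then be sent as the assets list of a separate import request with the same source value.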

Example asset import request

POST /import/assets

{
  "source": "VendorName Product",
  "assets": [
    {
      "fqdn": ["example_one.py.test"],
      "ipv4": ["192.168.1.100", "192.168.1.101"],
      "netbios_name": "example_one",
      "mac_address": ["00:00:00:11:22:33", "00:00:00:11:22:34"]
    },
    {
      "fqdn": ["example_two.py.test"],
      "ipv4": ["192.168.1.105"],
      "netbios_name": "example_two",
      "mac_address": ["00:00:00:11:22:35"]
    }
  ]
}

Example pyTenable code snippet for an asset import request

For more information about asset imports with pyTenable, see Assets in the pyTenable documentation.

from tenable.io import TenableIO

tvm = TenableIO(access_key='abc', secret_key='def')

# "import" is a reserved word in Python, so pyTenable exposes this call as
# asset_import(). It takes the source name followed by one or more asset
# dictionaries and returns the UUID of the import job.
job_id = tvm.assets.asset_import('VendorName Product',
    {
        'fqdn': ['example_one.py.test'],
        'ipv4': ['192.168.1.100', '192.168.1.101'],
        'netbios_name': 'example_one',
        'mac_address': ['00:00:00:11:22:33', '00:00:00:11:22:34']
    },
    {
        'fqdn': ['example_two.py.test'],
        'ipv4': ['192.168.1.105'],
        'netbios_name': 'example_two',
        'mac_address': ['00:00:00:11:22:35']
    }
)